Searchable transcripts of the Post Office Horizon IT Inquiry hearings

The Post Office Horizon IT Inquiry was established in 2020, led by retired High Court judge Sir Wyn Williams, to investigate the “failings which occurred with the Horizon IT system at the Post Office leading to the suspension, termination of subpostmasters’ contracts, prosecution and conviction of subpostmasters”.

I have made a site at https://postofficeinquiry.dracos.co.uk/ that takes a copy of the official transcripts, which are available in PDF or text format, and makes them available for display and searching. It also auto-links evidence IDs to the relevant page on the official site.

I hope this may be useful to people following the Inquiry, and to anyone who wants to know more about the “worst miscarriage of justice in British legal history”.

The rest of this page will explain the technical details of how it works, for those who may be interested.

Scraping (fetching the information from the official source)

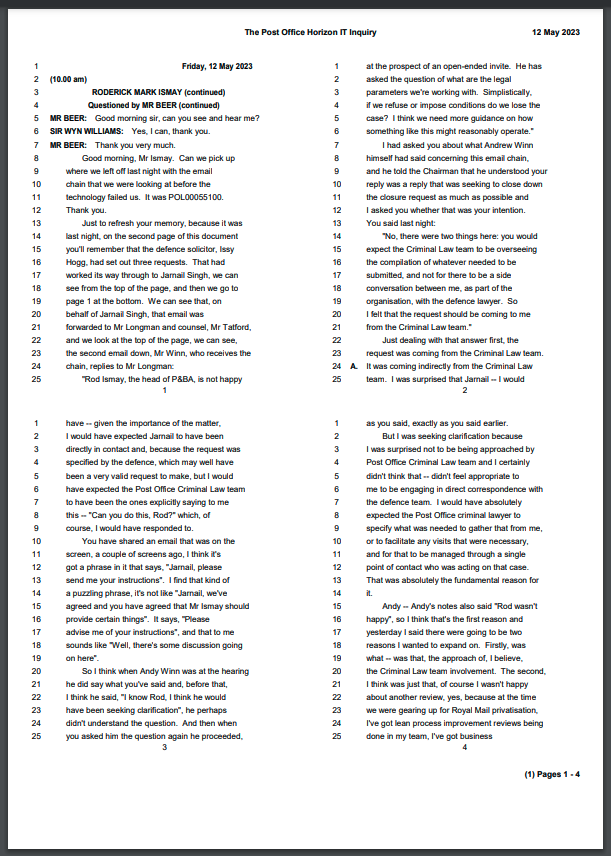

I noticed the Post Office inquiry transcript format was 25 numbered lines per page, which was basically the same format as the Leveson Inquiry for which I had written a scraper/parser some years ago for mySociety. So I dusted off the code for that and looked to see if it could be adapted here.

The code is in Python, which has two libraries that are useful for fetching the data: requests, which fetches URLs, and Beautiful Soup, which parses HTML. Combining those two gave me a script that would visit the official hearings page (and later on, the evidence list page), loop through the list, locate the text file of each transcript and download it.

Thankfully the text files are linear, one page at a time, so I did not need any of the 4-up (four pages to a sheet) handling that the Leveson Inquiry transcripts had required. At this point I had a data directory full of text files.
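As a rough illustration of that scraping step (not the code in the repository), here is a minimal sketch. It swaps in Python's standard library for requests and Beautiful Soup so that it runs without any dependencies. The names `LinkCollector`, `transcript_links` and `download`, and the assumption that every transcript is linked directly as a `.txt` file, are hypothetical.

```python
from html.parser import HTMLParser
from pathlib import Path
from urllib.parse import urljoin
from urllib.request import urlopen


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def transcript_links(html, base_url):
    """Return absolute URLs of all links that point at .txt files."""
    collector = LinkCollector()
    collector.feed(html)
    return [
        urljoin(base_url, href)
        for href in collector.hrefs
        if href.lower().endswith(".txt")  # assumption: transcripts end in .txt
    ]


def download(url, data_dir="data"):
    """Save one transcript into data_dir, named after the last URL segment."""
    target = Path(data_dir) / url.rsplit("/", 1)[-1]
    target.parent.mkdir(parents=True, exist_ok=True)
    with urlopen(url) as response:
        target.write_bytes(response.read())
    return target
```

Keeping the link extraction separate from the download means the parsing logic can be checked against a saved copy of the page without touching the network.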

Parsing (converting that information into a more useful format)

The main part of the parsing is taking the text line by line, and working out if something is a heading, a question, etc. But before that, the code strips off the line numbers, ignores the index, makes sure I haven’t missed a line, and then removes the maximum amount of left indentation it can find (this varies from page to page, file to file).
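The clean-up of a single page could be sketched like this. It is an illustration under assumptions rather than the repository's code: the function name `clean_page`, the regular expression, and the idea that each raw line begins with its line number followed by spaces are all hypothetical, and skipping the index is left out.

```python
import re

# Assumption: each raw line is optional spaces, a 1-2 digit line number,
# then the (possibly empty) text with its original spacing.
NUMBERED = re.compile(r"^\s*(\d{1,2})(.*)$")


def clean_page(raw_lines, lines_per_page=25):
    """Strip line numbers from one page and remove its common left indent."""
    texts = []
    for expected, raw in enumerate(raw_lines, start=1):
        match = NUMBERED.match(raw)
        # Check the numbers run 1, 2, 3... so a missed line is noticed.
        if not match or int(match.group(1)) != expected:
            raise ValueError(f"expected line {expected}, got {raw!r}")
        texts.append(match.group(2).rstrip())
    if len(texts) != lines_per_page:
        raise ValueError(f"page has {len(texts)} lines, not {lines_per_page}")

    # The smallest indent among non-empty lines is the most that can be
    # removed from every line without losing relative indentation.
    indents = [len(t) - len(t.lstrip()) for t in texts if t.strip()]
    margin = min(indents, default=0)
    return [t[margin:] for t in texts]
```

Working out the margin per page rather than per file matters because, as noted above, the indentation varies from page to page.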

It then loops through the lines, and each line is one of a few things:

- empty (skip),

- the date (skip),

- a message in brackets (normally the time or a lunch break),

- the start of a two-line message about an adjournment or similar, also in brackets (so have to remember a bit of state here to spot the end of it on the next line),

- a question heading (“Questioned by FOO”) which I use to know who is speaking at each subsequent “Q”,

- a witness heading (“Ms FOO (affirmed)”, though sometimes it is a lawyer reading a statement summary), which I also use to know who is speaking at each subsequent “A”,

- a general heading (announcements, opening statements),

- a line starting Q or A (the start of a question or an answer), or

- a line starting with someone’s name in capitals (a new speaker).

If it is none of those, I assume an indented line starts a new paragraph and an unindented line continues the current paragraph. This is enough to get a semantic structure for the whole document.
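The line-by-line decision described above might look something like the following sketch. The regular expressions, the category names, the "sworn" alternative and the `Q.`/`A.`/`NAME:` conventions are all assumptions made for illustration; general headings are not detected here, and the real parser will differ.

```python
import re

DATE = re.compile(
    r"^(Mon|Tues|Wednes|Thurs|Fri|Satur|Sun)day, \d{1,2} [A-Z][a-z]+ \d{4}$"
)
QUESTION_HEADING = re.compile(r"^Questioned by .+$")
WITNESS_HEADING = re.compile(r"\((affirmed|sworn)\)$")
SPEAKER = re.compile(r"^[A-Z][A-Z .'-]+:")  # e.g. "SIR WYN WILLIAMS:"


def classify(line):
    """Classify one cleaned line, ignoring any state from earlier lines."""
    text = line.strip()
    if not text:
        return "empty"
    if DATE.match(text):
        return "date"
    if text.startswith("("):
        # A bracket that does not close on this line continues on the next.
        return "message" if text.endswith(")") else "message_start"
    if QUESTION_HEADING.match(text):
        return "question_heading"
    if WITNESS_HEADING.search(text):
        return "witness_heading"
    if text.startswith("Q. "):
        return "question"
    if text.startswith("A. "):
        return "answer"
    if SPEAKER.match(text):
        return "speaker"
    # Fallback: indented means new paragraph, otherwise a continuation.
    return "paragraph" if line[:1].isspace() else "continuation"


def classify_all(lines):
    """Classify every line, remembering when a bracketed message is open."""
    kinds = []
    in_message = False
    for line in lines:
        if in_message:
            kinds.append("message_end")  # assumption: at most two lines
            in_message = False
            continue
        kind = classify(line)
        in_message = kind == "message_start"
        kinds.append(kind)
    return kinds
```

The only state carried between lines in this sketch is whether a bracketed message is still open; tracking who is currently asking or answering would sit on top of these categories.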

Once I have processed all the files, I can output that information however I want. I set up a nice URL structure using the phases of the inquiry, and I chose to output in reStructuredText format, because of where I had decided to store the information for display, which was Read the Docs. I committed the output to the same repository as the code, for ease of use (I could have put the output in a different branch, perhaps, but it was easier to see it all at once).
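To give a flavour of the output step, here is a minimal sketch of turning parsed speeches into reStructuredText. The function name `to_rst`, the input shape (a title plus `(speaker, paragraphs)` pairs) and the choice of bold speaker names are assumptions for illustration, not the layout the site actually uses.

```python
def to_rst(title, speeches):
    """Render a title and (speaker, paragraphs) pairs as reStructuredText."""
    # A section title is underlined with a row at least as long as the title.
    lines = [title, "=" * len(title), ""]
    for speaker, paragraphs in speeches:
        if not paragraphs:
            continue
        first, *rest = paragraphs
        # Bold speaker name, then the first paragraph on the same line.
        lines += [f"**{speaker}**: {first}", ""]
        for paragraph in rest:
            # Blank lines separate paragraphs in reStructuredText.
            lines += [paragraph, ""]
    return "\n".join(lines)
```

A fuller version would also need to escape characters that reStructuredText treats as markup, such as asterisks and backslashes, wherever they appear in the transcript text.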

Uploading (putting the information somewhere it can be seen)

Read the Docs is a site mainly used for hosting documentation for Python software. It does this by converting reStructuredText files to HTML using Sphinx, automatically including a nice clean template, navigation, a site search, and so on. This felt like a really good fit, even if this is not exactly normal software documentation. I really did not want to have to run my own site, search index, and so on.

I set up an account, paying to remove the ads, and set up GitHub so that it would send a webhook to Read the Docs on every push, telling it to compile a new version of the site from the generated files.

I had some teething issues with the tutorial, but found existing GitHub issues with solutions or workarounds, and after some trial and error had something up and running. I then spent quite a bit of time tweaking things, working out how tables of contents work so I did not have to update them with every new file, hiding irrelevant bits like Edit on GitHub and so on.

I am quite happy with the finished product :)

Updating (keeping the site up to date)

I had assumed I would run the update manually on my own machine and push the result whenever new transcripts were available. But GitHub lets you schedule actions to run, so with a bit more trial and error, streamlining and improving robustness, I ended up with a GitHub Action that runs a few times a day, checking for new transcripts and parsing them if found. It automatically commits new data, and uses GitHub Actions’ caching mechanism to avoid scraping or parsing things it already has.
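One simple way for a script to skip work it has already done is to check the data directory before downloading. The sketch below assumes, hypothetically, that the cached directory is restored before the script runs and that each file is named after the last segment of its URL; `missing_transcripts` is an illustrative name, not a function from the repository.

```python
from pathlib import Path


def missing_transcripts(urls, data_dir):
    """Return only the URLs whose file is not already present in data_dir."""
    data_dir = Path(data_dir)
    return [
        url
        for url in urls
        # Assumption: the local filename is the last segment of the URL.
        if not (data_dir / url.rsplit("/", 1)[-1]).exists()
    ]
```

With a check like this, a scheduled run that finds nothing new does no downloading or parsing at all.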

All the source code can be found at https://github.com/dracos/pohi.